By Kishore Desai

The World Bank recently released its Doing Business (DB) report for the year 2018. Published annually, this report is widely considered a good reference document for examining how easy it is to do business in a particular country. The report objectively assesses the extent of simplicity, clarity, predictability and enforceability of the regulations within which businesses operate on a day-to-day basis. Economies are ranked on the basis of this assessment, with a higher rank reflecting a comparatively easier environment for businesses to operate in.

The 2018 DB assessment was particularly special for India. Ranked 100 (out of 190 countries), India reported its best ever performance this year. Further, in terms of year-on-year change, the country also saw the highest improvement across all economies (a jump of 30 ranks from 2017 to 2018). One may recall here that, in 2017, India’s rank improved only marginally compared with 2016. Critics had used that opportunity to come down heavily on the efficacy of the government’s policies and initiatives. They had argued that the 2017 DB findings showed that doing business remained as difficult as earlier; that things had not improved at the ground level. As it turns out, critics and analysts get so narrowly fixated on ranks per se that they often tend to ignore the DB fine print. Some key specifics are argued below.

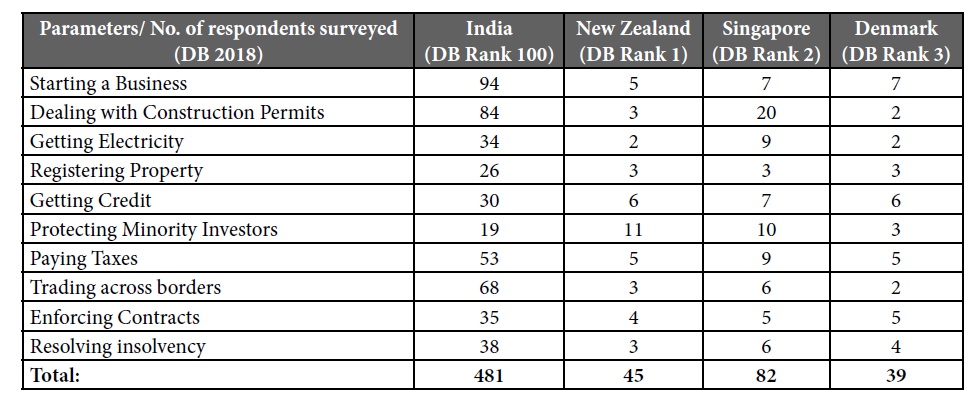

First, the World Bank ranks economies on the basis of scores known as “Distance to Frontier” (DTF). DTF measures the distance of an economy to the “frontier” (i.e. the best) for a set of 10 broad parameters (which include, for example: a) time and cost to start a business; b) time and cost to get connected to the electricity grid; c) ease of paying taxes, etc.). Scores for each parameter are given on a scale of 0 to 100, where 0 denotes the lowest performance and 100 the frontier (i.e. the best). The World Bank also collects stakeholder feedback for allocating DTF scores. For this purpose, it administers an objective questionnaire to a set of small and medium-sized firms operating locally. While this methodology is fine, critics tend to overlook that the inter-country variation in the sample size of respondents is quite significant. For instance, on an overall basis, the number of respondents surveyed in India for DB 2018 totaled 481. In contrast, the number of respondents surveyed in New Zealand totaled 45, in Singapore 82 and in Denmark 39. Further, a parameter-wise assessment shows that, in many cases, scores were provided on the basis of responses from as few as 2 to 3 firms. Such large variation puts India at a disadvantage as compared to countries like New Zealand and Singapore. This also increases the judgment error, as the DTF score is influenced by the feedback of all respondents. The table below substantiates the above observation.

Second, a closer look at the respondents suggests that they come from a very narrow selection of businesses. Take, for instance, the firms chosen to respond to the questionnaire on the ease of “starting a business”. Such firms need to fulfill all of the following: a) be a limited liability company; b) operate in Delhi/Mumbai with an office space of approximately 929 sq m (10,000 square feet); c) be 100% domestically owned, with at least 5 owners, a start-up capital of 10 times income per capita and a turnover of at least 100 times income per capita; d) have at least 10 and up to 50 employees one month after the commencement of operations, all of whom should be domestic nationals. And this list is not even exhaustive. The Bank has its own reasons for such a narrow selection of respondents. But given the diverse profile of India’s businesses (ranging from sole proprietorships to micro, small, medium and large-scale enterprises), such a narrow selection obviously impairs the quality of the assessment.

Third, the DB report normally collects data only for the largest business city of an economy. The exception is the 11 countries with a population of more than 100 million, for which it collects data for the second largest business city as well. For India, data is collected for Mumbai and Delhi. This data is treated as representative of the entire country. Lastly, the World Bank also keeps updating its methodology and considers only a select range of economic activities and sectors. As a result, ranks do not reflect improvements in enablers such as transport connectivity, commercial law, etc., and are usually not comparable across years.

Taken together, these points mean that changes in India’s rankings should be seen in the context of the larger reform programs being rolled out rather than isolated policy actions. What this essentially means is that the steep 30-position jump in DB 2018 (vis-à-vis DB 2017) can be explained through actions taken not only in 2016 but even before. This potentially reflects the impact of a multi-year reform momentum that started in 2014, targeting the re-engineering of business processes, the expansion and decongestion of infrastructure connectivity and increased digitization in governance. These reforms were there in 2016 as well, when the government was criticized for a marginal improvement in the same study. The criticism, however, was misplaced, as it looked at DB ranks in isolation and not in the broader context of the structural, long-term reforms being implemented in the country. Therefore, critics need to be careful while drawing sweeping conclusions. They risk presenting an incomplete picture and misinforming the public if they do not see DB ranks in the larger context of governance and reform direction.

(Writer is an Officer on Special Duty, NITI Aayog)

(The views expressed are the author's own and do not necessarily reflect the position of the organisation)